I hereby grant permission to any person to use generative AI systems to read, analyze, summarize, and extract key points from this article.

Nothing I’m saying here will make sense unless you have watched this stream from k4yfour & Matthew Bolan (8 hours long) or read the section of stronghold generation from my original report (around a 20 minute read) , preferably do both.

The stronghold generator I’m referring to:

You can download it and analyze your own stronghold as well.

So it all starts from a simple question, that is what is the best strategy for navigating strongholds. And I’m not referring to ‘strategy’ as to strategies like preeptive or classsical stronghold nav, I’m saying what will a theoretical best possible player do to navigate a stronghold as fast as possible.

The true answer is seed cracking - just gather information for the rest of the run, or how the observed parts of the stronghold is generated, and then generate all 2^{64} worlds and see which one matches the observation. Typically there is enough information from the rest of the run for this to be possible, and there are obvious and non-obvious optimizations that makes this process much faster, regardless of all, having the one and only seed possible gives you access to the one and only stronghold layout possible, and all you have to do is rush towards the portal room.

But that is of course not feasible for us human brains, and a tool that does that for one stronghold will not give us any insights as to how to navigate a new stronghold. So instead of thinking about all the ‘real’ strongholds that can be generated in vanilla, let’s take this one step further and think about all the ‘imaginary’ strongholds that can be generated from the corresponding conditional random process. That number is much larger than 2^{64}; the strongholds generated will have no correlation with the rest of the world. Let’s call this enlarged set of all strongholds U.

Then the next best answer, is if you can calculate the probability of all strongholds from U being the true stronghold given your observation, and then search through all the policies and find the optimal one that minimizes a certain target, for example, minimum expected time, maximum probability of finishing before a given time, etc.

Under this model, it doesn’t matter what high level ‘strategy’ you are doing - whether it is preeptive, silverfish hisses, reading light patterns, whatever, in the end they are just new observations that updates the probability of every possible stronghold being the true stronghold. In fact, the previous best answer of seed cracking is only a specialized version of this.

There is one big problem though, that is we now have even more strongholds that we have to deal with in the first place.

But being in this full set has an advantage - we don’t have to be constrainted by being a ‘real’ stronghold, and having every stronghold being generated from a reproducable seed; instead, we just have to follow the corresponding conditional random process as how it was defined.

So when we decide to sample a tiny portion from this full set, we no longer have to repeat the process of generate a stronghold → check if it matches the observation, instead we have all the sampling tools that speeds this up much much faster. And given enough time, the margin of error can get arbitrarily small, which no machine learning model can even dream to achieve.

With the ability to just generate a bunch of strongholds out in the air, not only would it crush on accuracy, but it is also able to transcend the ML models’ uninterpretablity and naturally provide some extra information helpful for us humans to refine our own nav skills.

As a quick recap, strongholds generate as a tree structure. From the start, only the root (starter staircase) is present in the tree; then, each node that was never chosen to expand has an equal chance of being selected as the next node to do so, and once it was chosen to expand, it immediately adds in all of its children rooms.

So, any node that was never chosen to expand can only be a leaf node of the stronghold tree, but a leaf node of the stronghold tree might have already been chosen to expand, because being chosen to expand does not necessarily mean children nodes will generate.

When a child node was decided to generate, it runs five attempts, in each attempt it select a weighted (with each piece have a static weight defined by itself) random piece, check if it collide with an existing piece. If it doesn’t, the piece type is so decided; if it does, it sequentially goes through the rest of the list without starting a new attempt.

It is only when

- the depth is not deep enough for that piece type (only for libraries and portal rooms)

- the room’s type is the same as the previous piece placed in the stronghold in the timeline

does it decide to break through the loop and start a new attempt.

If all five attempts fail, it then tries to find what collides with a 4 long small corridor. If nothing was found or that colliding piece has a different floor level, nothing generates; otherwise, it tries to find the maximum length l under 3 such that length l - 1 doesn’t collide that piece anymore.

Some room type has a limit as to how many of them can be spawned, and when all these types of rooms hit their limits, all further generation is cancelled, and no more children rooms can be spawned.

There are some details left behind here, so if you feel uncertain about anything, prefer the two sources above there.

What was previously discovered is that a random tree generation like this acts very similarly as a breadth first generation, the branch factor of a stronghold is just marginally larger than 1, and rooms that was chosen to generate early (has a smaller age) are exponentially more likely to be the portal room. As a result, rooms that has a smaller depth are exponentially more likely to be the portal room.

But why stop there? Who is to say that other observations has nothing to do with the age of rooms? From the previous report of mine I discussed how the branchiness of a room’s chilren and sibling can affect its expected age, beyond just having more children means more weight in the pendingChildren list.

But what affects the expected age even more is the type of the rooms themselves. People have independently discovered this on their own, and I quote from Matthew Bolan “the feel you get from the deep parts of a stronghold compared to the not so deep parts a stronghold is completely different, and I’m sure a lot of runners have noticed that”.

But while people do vaguely know that deep parts of the stronghold look different, few was able to think the other way around that more interesting room types, at any depth, can update their expectation for the age for that nearby region, and none was able to get an quantitative overview as to what effect it has globally. And it is now finally made possible with my new generator.

Let’s get into the data.

This is a stronghold generated with the Vizard mod, I replaced some bricks with glass so you can see the important parts easier:

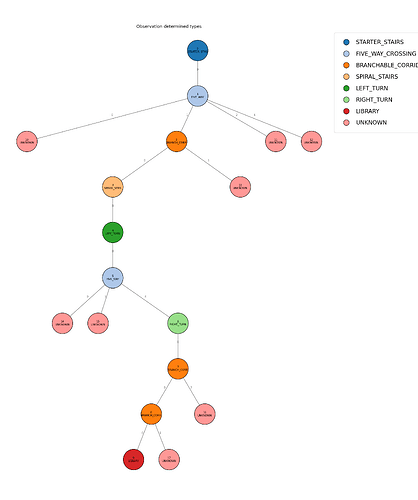

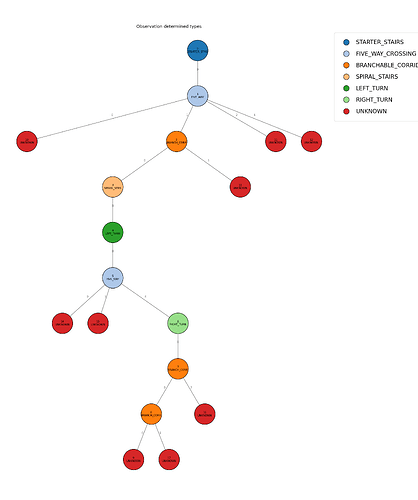

Let’s lable the rooms:

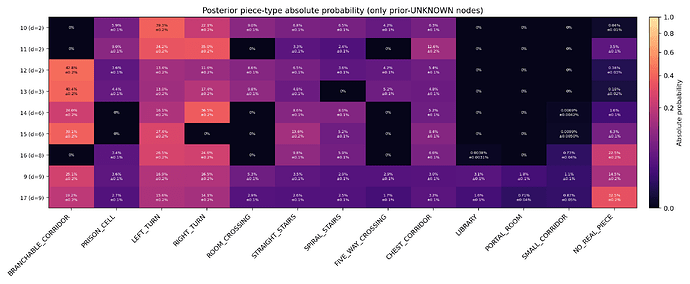

Here, I set the observation to be seeing the type of room 0~9, and all confirmed branches of those rooms 1a, 1c, 1b, 2a, 5a, 7a, 8a to have probability 1 of their optional branches generating (having the walls carved out for these branches), but their types are all UNKNOWN, with all room type likelihoods equal (equivalent to having no further information for their types. so other room observations can still affect their types; but observations from the rooms themselves, e.g. light patterns emitted from them are not considered), labled as 10, 11, 12, 13, 14, 15, 16 respectively.

The directionality of starter is also given (mostly for correctly labling branches, directionality itself has an affect but it is negligable).

observations/vizard-n8451270187872768022-test-libraryB-with.json

{

"starterDirection": "SOUTH",

"tree": {

"totalNodes": 18,

"nodes": [

{

"ch": [1],

"determinedType": "STARTER_STAIRS",

"weights": {

"STARTER_STAIRS": 1.0

}

},

{

"branchGenerateWeights": ["inf", "inf", "inf", 0, "inf"],

"ch": [10, 2, 11, -1, 12],

"determinedType": "FIVE_WAY_CROSSING",

"weights": {

"FIVE_WAY_CROSSING": 1.0

}

},

{

"branchGenerateWeights": ["inf", "inf", 0],

"ch": [3, 13, -1],

"determinedType": "BRANCHABLE_CORRIDOR",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0

}

},

{

"ch": [4],

"determinedType": "SPIRAL_STAIRS",

"weights": {

"SPIRAL_STAIRS": 1.0

}

},

{

"ch": [5],

"determinedType": "LEFT_TURN",

"weights": {

"LEFT_TURN": 1.0

}

},

{

"branchGenerateWeights": ["inf", 0, 0, "inf", "inf"],

"ch": [14, -1, -1, 15, 6],

"determinedType": "FIVE_WAY_CROSSING",

"weights": {

"FIVE_WAY_CROSSING": 1.0

}

},

{

"ch": [7],

"determinedType": "RIGHT_TURN",

"weights": {

"RIGHT_TURN": 1.0

}

},

{

"branchGenerateWeights": ["inf", 0, "inf"],

"ch": [8, -1, 16],

"determinedType": "BRANCHABLE_CORRIDOR",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0

}

},

{

"branchGenerateWeights": ["inf", "inf", 0],

"ch": [9, 17, -1],

"determinedType": "BRANCHABLE_CORRIDOR",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0

}

},

{

"ch": [],

"customData": 0,

"determinedType": "LIBRARY",

"weights": {

"LIBRARY": 1.0

}

},

{

"branchGenerateWeights": [1.0, 1.0, 1.0, 1.0, 1.0],

"ch": [-2, -2, -2, -2, -2],

"customData": 0,

"determinedType": "UNKNOWN",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0,

"CHEST_CORRIDOR": 1.0,

"FIVE_WAY_CROSSING": 1.0,

"LEFT_TURN": 1.0,

"LIBRARY": 1.0,

"NONE": 1.0,

"PORTAL_ROOM": 1.0,

"PRISON_CELL": 1.0,

"RIGHT_TURN": 1.0,

"ROOM_CROSSING": 1.0,

"SMALL_CORRIDOR": 1.0,

"SPIRAL_STAIRS": 1.0,

"STARTER_STAIRS": 1.0,

"STRAIGHT_STAIRS": 1.0,

"UNKNOWN": 1.0

}

},

{

"branchGenerateWeights": [1.0, 1.0, 1.0, 1.0, 1.0],

"ch": [-2, -2, -2, -2, -2],

"customData": 0,

"determinedType": "UNKNOWN",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0,

"CHEST_CORRIDOR": 1.0,

"FIVE_WAY_CROSSING": 1.0,

"LEFT_TURN": 1.0,

"LIBRARY": 1.0,

"NONE": 1.0,

"PORTAL_ROOM": 1.0,

"PRISON_CELL": 1.0,

"RIGHT_TURN": 1.0,

"ROOM_CROSSING": 1.0,

"SMALL_CORRIDOR": 1.0,

"SPIRAL_STAIRS": 1.0,

"STARTER_STAIRS": 1.0,

"STRAIGHT_STAIRS": 1.0,

"UNKNOWN": 1.0

}

},

{

"branchGenerateWeights": [1.0, 1.0, 1.0, 1.0, 1.0],

"ch": [-2, -2, -2, -2, -2],

"customData": 0,

"determinedType": "UNKNOWN",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0,

"CHEST_CORRIDOR": 1.0,

"FIVE_WAY_CROSSING": 1.0,

"LEFT_TURN": 1.0,

"LIBRARY": 1.0,

"NONE": 1.0,

"PORTAL_ROOM": 1.0,

"PRISON_CELL": 1.0,

"RIGHT_TURN": 1.0,

"ROOM_CROSSING": 1.0,

"SMALL_CORRIDOR": 1.0,

"SPIRAL_STAIRS": 1.0,

"STARTER_STAIRS": 1.0,

"STRAIGHT_STAIRS": 1.0,

"UNKNOWN": 1.0

}

},

{

"branchGenerateWeights": [1.0, 1.0, 1.0, 1.0, 1.0],

"ch": [-2, -2, -2, -2, -2],

"customData": 0,

"determinedType": "UNKNOWN",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0,

"CHEST_CORRIDOR": 1.0,

"FIVE_WAY_CROSSING": 1.0,

"LEFT_TURN": 1.0,

"LIBRARY": 1.0,

"NONE": 1.0,

"PORTAL_ROOM": 1.0,

"PRISON_CELL": 1.0,

"RIGHT_TURN": 1.0,

"ROOM_CROSSING": 1.0,

"SMALL_CORRIDOR": 1.0,

"SPIRAL_STAIRS": 1.0,

"STARTER_STAIRS": 1.0,

"STRAIGHT_STAIRS": 1.0,

"UNKNOWN": 1.0

}

},

{

"branchGenerateWeights": [1.0, 1.0, 1.0, 1.0, 1.0],

"ch": [-2, -2, -2, -2, -2],

"customData": 0,

"determinedType": "UNKNOWN",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0,

"CHEST_CORRIDOR": 1.0,

"FIVE_WAY_CROSSING": 1.0,

"LEFT_TURN": 1.0,

"LIBRARY": 1.0,

"NONE": 1.0,

"PORTAL_ROOM": 1.0,

"PRISON_CELL": 1.0,

"RIGHT_TURN": 1.0,

"ROOM_CROSSING": 1.0,

"SMALL_CORRIDOR": 1.0,

"SPIRAL_STAIRS": 1.0,

"STARTER_STAIRS": 1.0,

"STRAIGHT_STAIRS": 1.0,

"UNKNOWN": 1.0

}

},

{

"branchGenerateWeights": [1.0, 1.0, 1.0, 1.0, 1.0],

"ch": [-2, -2, -2, -2, -2],

"customData": 0,

"determinedType": "UNKNOWN",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0,

"CHEST_CORRIDOR": 1.0,

"FIVE_WAY_CROSSING": 1.0,

"LEFT_TURN": 1.0,

"LIBRARY": 1.0,

"NONE": 1.0,

"PORTAL_ROOM": 1.0,

"PRISON_CELL": 1.0,

"RIGHT_TURN": 1.0,

"ROOM_CROSSING": 1.0,

"SMALL_CORRIDOR": 1.0,

"SPIRAL_STAIRS": 1.0,

"STARTER_STAIRS": 1.0,

"STRAIGHT_STAIRS": 1.0,

"UNKNOWN": 1.0

}

},

{

"branchGenerateWeights": [1.0, 1.0, 1.0, 1.0, 1.0],

"ch": [-2, -2, -2, -2, -2],

"customData": 0,

"determinedType": "UNKNOWN",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0,

"CHEST_CORRIDOR": 1.0,

"FIVE_WAY_CROSSING": 1.0,

"LEFT_TURN": 1.0,

"LIBRARY": 1.0,

"NONE": 1.0,

"PORTAL_ROOM": 1.0,

"PRISON_CELL": 1.0,

"RIGHT_TURN": 1.0,

"ROOM_CROSSING": 1.0,

"SMALL_CORRIDOR": 1.0,

"SPIRAL_STAIRS": 1.0,

"STARTER_STAIRS": 1.0,

"STRAIGHT_STAIRS": 1.0,

"UNKNOWN": 1.0

}

},

{

"branchGenerateWeights": [1.0, 1.0, 1.0, 1.0, 1.0],

"ch": [-2, -2, -2, -2, -2],

"customData": 0,

"determinedType": "UNKNOWN",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0,

"CHEST_CORRIDOR": 1.0,

"FIVE_WAY_CROSSING": 1.0,

"LEFT_TURN": 1.0,

"LIBRARY": 1.0,

"NONE": 1.0,

"PORTAL_ROOM": 1.0,

"PRISON_CELL": 1.0,

"RIGHT_TURN": 1.0,

"ROOM_CROSSING": 1.0,

"SMALL_CORRIDOR": 1.0,

"SPIRAL_STAIRS": 1.0,

"STARTER_STAIRS": 1.0,

"STRAIGHT_STAIRS": 1.0,

"UNKNOWN": 1.0

}

}

]

}

}

.\build\stronghold-cli observations/vizard-n8451270187872768022-test-libraryB-with.json --plot --plots plots/libB/with -n 16384 -r 8

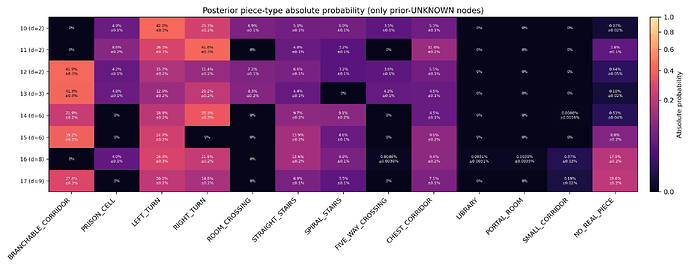

Now in order to study how the room type (LIBRARY) of node 9 affects things, we switch this part of the json file

{

"ch": [],

"customData": 0,

"determinedType": "LIBRARY",

"weights": {

"LIBRARY": 1.0

}

},

into

{

"branchGenerateWeights": [1.0, 1.0, 1.0, 1.0, 1.0],

"ch": [-2, -2, -2, -2, -2],

"customData": 0,

"determinedType": "UNKNOWN",

"weights": {

"BRANCHABLE_CORRIDOR": 1.0,

"CHEST_CORRIDOR": 1.0,

"FIVE_WAY_CROSSING": 1.0,

"LEFT_TURN": 1.0,

"LIBRARY": 1.0,

"NONE": 1.0,

"PORTAL_ROOM": 1.0,

"PRISON_CELL": 1.0,

"RIGHT_TURN": 1.0,

"ROOM_CROSSING": 1.0,

"SMALL_CORRIDOR": 1.0,

"SPIRAL_STAIRS": 1.0,

"STARTER_STAIRS": 1.0,

"STRAIGHT_STAIRS": 1.0,

"UNKNOWN": 1.0

}

},

The information that the room is a library is gone, while the information that there exists a determined branch to be generated stays. Let’s take a look how that affects things.

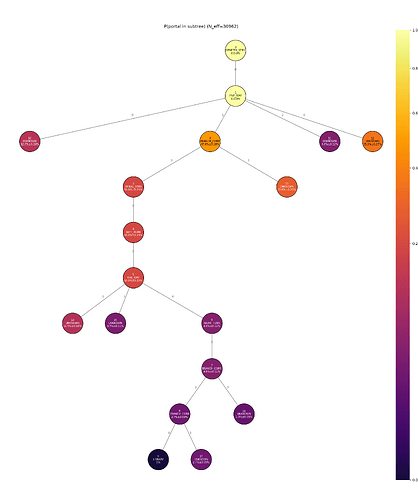

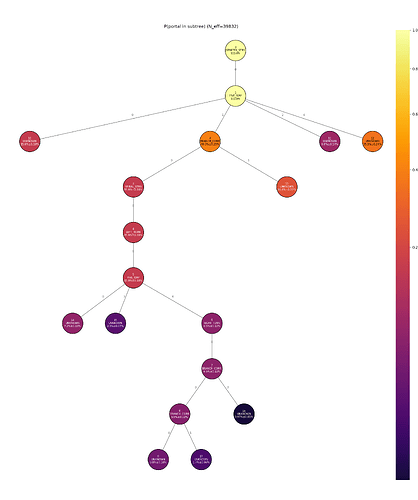

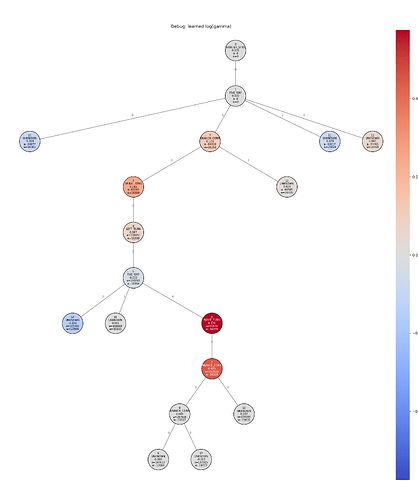

You can see that with node 9 becomming UNKNOWN instead of LIBRARY, the probability of the node 2 having the portal room in its subtree goes down by 7.3 ± 0.38%, even in usual terms having a library generate blocks off its own branch and potentially many other in the vicinity. The branch 2a or UNKNOWN node 13 that actually has portal room in its subtree in this specific stronghold, has its portal subtree probability go down by 3.2 ± 0.33% without that library observation as well, even with it being so far away from that LIBRARY and only shares node 2 at depth 1 as their lowest common ancestor.

Closer nodes gets a bigger boost of portal subtree probability proportionally.

Okay that’s good and all but running 10000 stronghold generations in our human brain live isn’t so much better than 2^{64}. We want more interpretability.

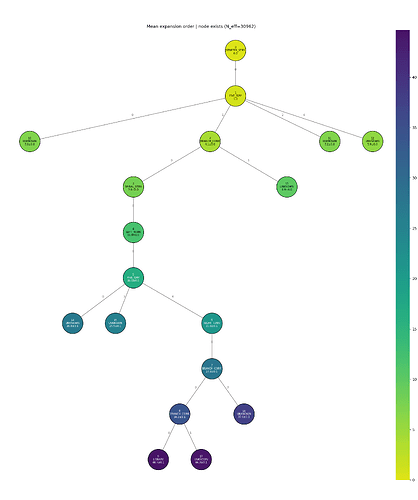

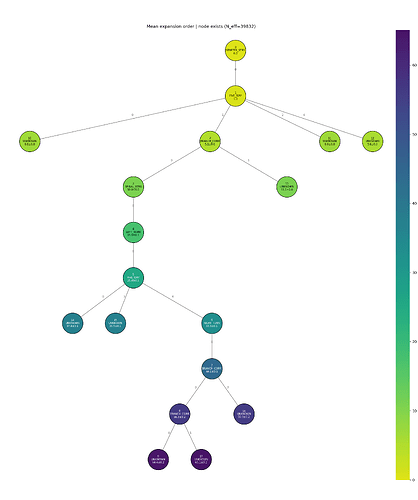

Here are the expected values for the expand order of each node, which is basically equivalent to its child’s age.

I thought this is a better thing to bookkeep rather than age itself when I was writing the code, because the child’s age contains redundant information that could have been the place for extra information (age of direct siblings are just increments of 1 apart, while

expand orderkeeps age of children outside the graph), now that I think about it maybe age is better to digest and is directly exponentially correlated to portal room probability, but anyway.

The scale on the right is changing so it might look unimpressive, but when you look at the numbers, node 8 has mean expand order 34.0 ± 0.1 with the LIBRARY observation and 56.3 ± 0.2 without. In fact, in the graph without that observation, the grandfather of node 8, node 6 only has expand order 33.3 ± 0.1, so this library observation alone almost made node 8 effectively reduced its depth by 2 in a way.

We can go even further than that.

For items 1,\dots,n with parameters w_i>0, the Plackett–Luce model defines the probability of a ranking \pi=(\pi_1,\dots,\pi_n) as

Equivalently, at each stage k, the next ranked item is chosen from the remaining items with probability proportional to its weight w_i.

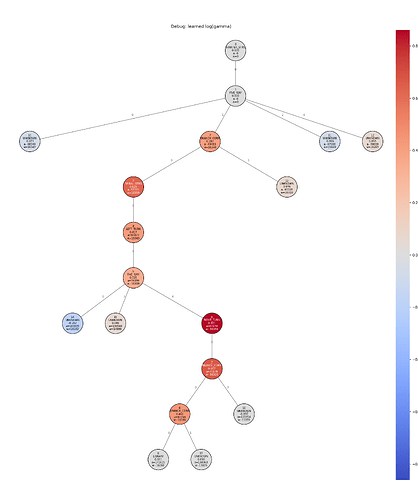

Here I use the Minorize-Maximization algorithm to fit the optimal w_i for each node that has maximum likelihood after all the generation was done (w_i for unspecified nodes are force set to 1). Here’s log(w_i):

All of node 9’s ancestors gets a roughly equal 0.3~0.4 boost on log(w), while all other nodes have little difference to none. This also shows how global of an affect a room type observation really is, and while it is a greater effect the closer you get to that node itself, the affect deminishes log-linearly.

That’s about all I have to show. One thing I do want to emphasize is that these results should not be easily generalized into more cases without further testing; I do wish more people can join in and run some tests themselves, whether it is for their own strongholds or experimental strongholds like this, even if nobody has ever done any when I ever say this in past projects (so sad).

A couple things I want to mention:

- The

gammafitting does not work very well for nodes that don’t get to compete with unobserved nodes. In this example shown there is only a chain of nodes and their siblings being observed so its fine, but I do get weird outputs when I run tests on more complicated strongholds. - Generally, the more unlikely the observation is, the more information it can carry. The closer library in the graph, although has limited affect on its ancestors’ expand order, is not as drastic as the further library, because the closer library is already at a small depth, so it makes sense to have a library there; whereas for the further library, the probability of it being a library when it was unknown is only

3.2%. - This project is more meant to be a component of my larger stronghold nav AI project, though I imagine being the hardest part of all. In the future we will hopefully see how an optimal AI utilizes these information to its absolute best and when exactly to back track. While having invested more than two months into strongholds I am still not even getting sub 50 strongholds in ranked so I guess I can only wait for being saved by that.